launchthat

Monday.com API Caching: How We Cut Response Times by 90%

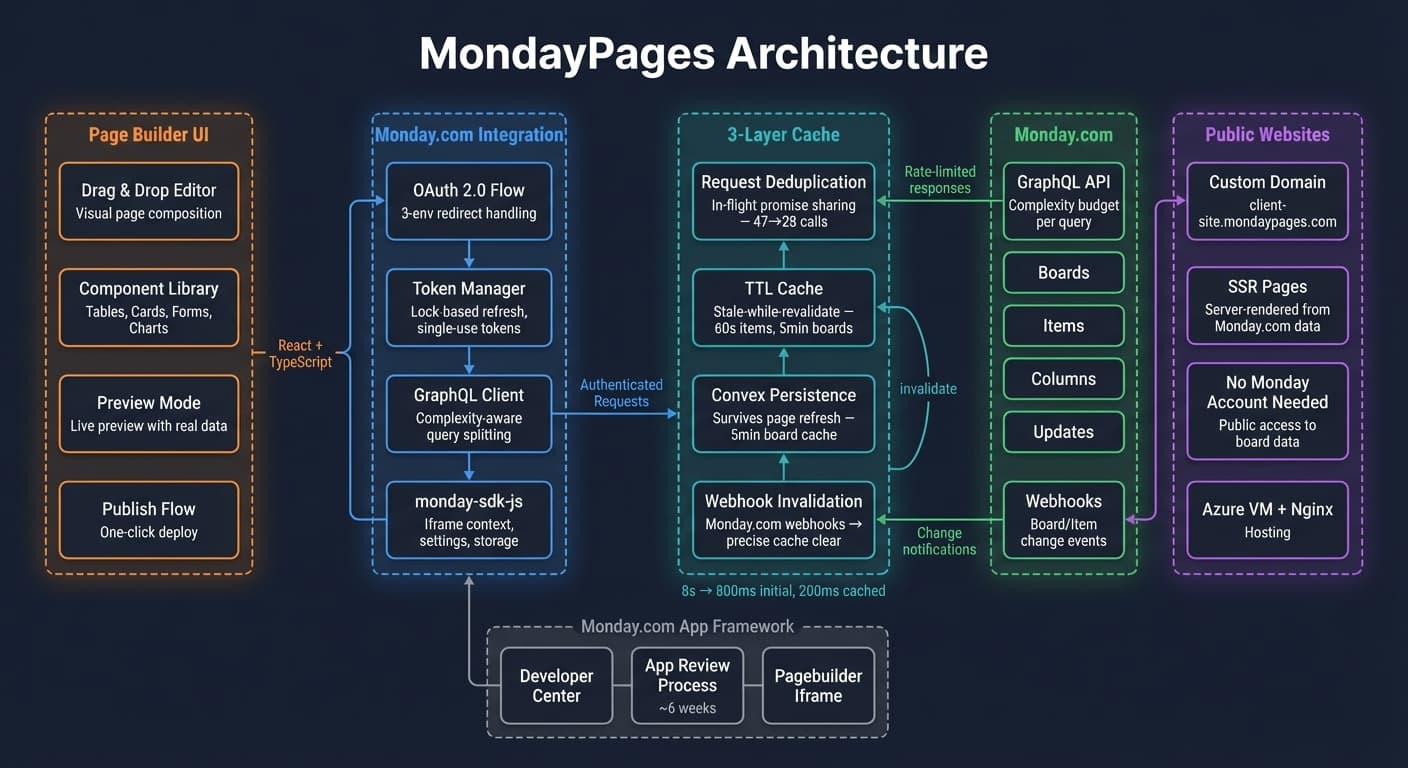

Our Monday.com plugin made 47 API calls per dashboard load. Users waited 8 seconds for data they had seen 5 minutes ago. Here is how we built a caching layer that made the integration feel instant.

The Monday.com integration was the most requested feature in our portal. Customers wanted their boards, items, and updates visible alongside CRM data without switching tabs. We built it. It worked. And it was painfully slow.

Every time a user opened the Monday.com dashboard widget, we made 47 API calls to Monday's GraphQL API. Board metadata, column definitions, item values, subitems, updates, and person assignments each required their own requests, because Monday's API does not support deep nesting in a single query without hitting complexity limits.

The result: 8 seconds to render a dashboard that showed data the user had already seen 5 minutes ago. Nobody wants to wait 8 seconds for stale data.

The problem: API calls that compound

Monday.com's GraphQL API has a complexity budget. Each query consumes points based on the fields you request and the depth of nesting. Request too much in one query, and you hit the limit. So we split into smaller queries.

The split created a waterfall:

- Fetch boards (1 call) → 200ms
- Fetch columns for each board (N calls) → 800ms
- Fetch items for each board (N calls) → 1,200ms
- Fetch subitems (N calls) → 1,500ms
- Fetch updates (N calls) → 2,000ms
- Fetch person details (N calls) → 1,000ms
- Assemble and render → 300ms

Even with parallel requests within each tier, the total was consistently 6-8 seconds. And most of that data had not changed since the last load.
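To see where the time goes, the waterfall can be modeled as tiers whose latencies add up. This is a minimal sketch that assumes the tiers run strictly one after another and uses the per-tier timings listed above; the `Tier` type and `totalLatencyMs` helper are illustrative names, not taken from the plugin.

```typescript
// Per-tier latencies from the waterfall above. Each tier waits for the
// previous one, so parallelism inside a tier cannot shorten the chain.
interface Tier {
  label: string;
  latencyMs: number;
}

const tiers: Tier[] = [
  { label: "boards", latencyMs: 200 },
  { label: "columns", latencyMs: 800 },
  { label: "items", latencyMs: 1200 },
  { label: "subitems", latencyMs: 1500 },
  { label: "updates", latencyMs: 2000 },
  { label: "person details", latencyMs: 1000 },
  { label: "assemble and render", latencyMs: 300 },
];

// Sequential tiers: total latency is the sum of the tier latencies.
function totalLatencyMs(steps: Tier[]): number {
  return steps.reduce((sum, step) => sum + step.latencyMs, 0);
}

console.log(totalLatencyMs(tiers)); // 7000
```

Seven seconds sits in the middle of the 6-8 second range we measured, which is why shaving individual requests would not have been enough: the chain itself had to get shorter.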

The solution: three-layer caching

We built a caching system with three layers, each solving a different part of the problem.

Layer 1: Request deduplication

Multiple components on the same page often need the same data. The board header needs board metadata. The item list needs board metadata. The column filters need board metadata. Without deduplication, we fetch the same board three times.

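```typescript
class RequestDeduplicator {
  // Requests that have started but not yet settled, keyed by resource.
  private inflight = new Map<string, Promise<unknown>>();

  async fetch<T>(key: string, fetcher: () => Promise<T>): Promise<T> {
    // Reuse the pending promise if the same resource is already being fetched.
    const existing = this.inflight.get(key);
    if (existing) return existing as Promise<T>;

    // Remove the entry once the request settles, whether it succeeds or fails.
    const promise = fetcher().finally(() => this.inflight.delete(key));
    this.inflight.set(key, promise);
    return promise;
  }
}
```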

If a request for the same resource is already in flight, we return the existing promise instead of making a duplicate call. This alone cut our API calls from 47 to 28.
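As a usage illustration, here is a small sketch of three components asking for the same board at once. The class is a compact copy of the deduplicator so the example runs on its own; `fetchBoardMetadata`, the `board:123` key, and the 10ms delay are hypothetical stand-ins for a real Monday.com request.

```typescript
// Compact copy of the deduplicator so this example is self-contained.
class InflightDeduplicator {
  private inflight = new Map<string, Promise<unknown>>();

  fetch<T>(key: string, fetcher: () => Promise<T>): Promise<T> {
    const existing = this.inflight.get(key);
    if (existing) return existing as Promise<T>;
    const promise = fetcher().finally(() => this.inflight.delete(key));
    this.inflight.set(key, promise);
    return promise;
  }
}

let networkCalls = 0;

// Hypothetical stand-in for a Monday.com board request.
async function fetchBoardMetadata(boardId: string): Promise<{ id: string }> {
  networkCalls += 1;
  await new Promise((resolve) => setTimeout(resolve, 10));
  return { id: boardId };
}

async function loadDashboard(): Promise<number> {
  const dedup = new InflightDeduplicator();
  const load = () => dedup.fetch("board:123", () => fetchBoardMetadata("123"));
  // Header, item list, and column filters all request the same board.
  await Promise.all([load(), load(), load()]);
  return networkCalls; // one network call shared by three callers
}
```

Note that deduplication only helps while a request is still pending. Once it settles, the next caller triggers a new fetch, which is the gap the TTL cache fills.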

Layer 2: TTL cache with stale-while-revalidate

Most Monday.com data does not change frequently. Board structures change rarely. Column definitions change weekly at most. Item values change more often, but a 60-second staleness window is acceptable for a dashboard view.

```typescript
interface CacheEntry<T> {
  data: T;
  fetchedAt: number; // epoch milliseconds
  ttl: number; // milliseconds
}

class TtlCache {
  private store = new Map<string, CacheEntry<unknown>>();

  get<T>(key: string): { data: T; stale: boolean } | null {
    const entry = this.store.get(key) as CacheEntry<T> | undefined;
    if (!entry) return null;

    const age = Date.now() - entry.fetchedAt;

    // Past twice the TTL the entry is too old to show: evict and report a miss.
    if (age > entry.ttl * 2) {
      this.store.delete(key);
      return null;
    }

    // Between one and two TTLs the entry is served but flagged as stale.
    return { data: entry.data, stale: age > entry.ttl };
  }

  set<T>(key: string, data: T, ttl: number): void {
    this.store.set(key, { data, fetchedAt: Date.now(), ttl });
  }
}
```

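The stale-while-revalidate pattern is critical: return cached data immediately so the UI renders fast, then refresh in the background. In the cache above, an entry older than its TTL but younger than twice its TTL is returned flagged as stale, which is the caller's cue to refetch; anything older is evicted and treated as a miss. The user sees data instantly and gets fresh data within seconds, without a loading spinner.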
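The background refresh itself is not shown in the cache class, so here is one way it could be wired up. This is a sketch under assumptions, not the plugin's implementation: `SwrCache`, its `refreshing` set, and the injectable `now` clock are hypothetical names introduced for illustration.

```typescript
interface SwrEntry<T> {
  data: T;
  fetchedAt: number;
  ttl: number;
}

// Hypothetical helper: wraps a fetcher with stale-while-revalidate reads.
class SwrCache {
  private store = new Map<string, SwrEntry<unknown>>();
  private refreshing = new Set<string>();
  private now: () => number;

  // `now` is injectable so the behavior can be exercised without real waiting.
  constructor(now: () => number = Date.now) {
    this.now = now;
  }

  async get<T>(key: string, ttl: number, fetcher: () => Promise<T>): Promise<T> {
    const entry = this.store.get(key) as SwrEntry<T> | undefined;
    const age = entry ? this.now() - entry.fetchedAt : Infinity;

    // Missing or older than 2x TTL: too old to show, so wait for the network.
    if (!entry || age > entry.ttl * 2) {
      return this.refresh(key, ttl, fetcher);
    }

    // Stale but usable: start one background refresh, return cached data now.
    if (age > entry.ttl && !this.refreshing.has(key)) {
      this.refreshing.add(key);
      void this.refresh(key, ttl, fetcher)
        .catch(() => undefined) // keep serving stale data if the refresh fails
        .finally(() => this.refreshing.delete(key));
    }
    return entry.data;
  }

  private async refresh<T>(key: string, ttl: number, fetcher: () => Promise<T>): Promise<T> {
    const data = await fetcher();
    this.store.set(key, { data, fetchedAt: this.now(), ttl });
    return data;
  }
}
```

The `refreshing` set keeps a burst of stale reads from starting several refreshes for the same key. Swallowing refresh errors is a deliberate choice in this sketch: a failed background refresh leaves the stale entry in place until it ages out.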

Layer 3: Convex persistence

For data that is expensive to fetch but changes infrequently — board structures, column schemas, workspace metadata — we persist to Convex:

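```typescript
// Standard Convex imports; the relative path assumes this file sits in convex/.
import { query } from "./_generated/server";
import { v } from "convex/values";

// boardValidator (the validator for the cached board shape) is defined elsewhere.
const BOARD_CACHE_MAX_AGE_MS = 300_000; // 5 minutes

export const getCachedBoard = query({
  args: { boardId: v.string(), workspaceId: v.id("workspaces") },
  returns: v.union(v.null(), boardValidator),
  handler: async (ctx, { boardId, workspaceId }) => {
    const cached = await ctx.db
      .query("mondayBoardCache")
      .withIndex("by_board_and_workspace", (q) =>
        q.eq("boardId", boardId).eq("workspaceId", workspaceId)
      )
      .unique();

    // No persisted entry, or one older than five minutes, counts as a miss.
    if (!cached) return null;
    if (Date.now() - cached.fetchedAt > BOARD_CACHE_MAX_AGE_MS) return null;
    return cached.data;
  },
});
```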

This layer survives page refreshes and browser restarts. When a user returns to the Monday.com widget within the five-minute window, the initial render uses persisted data while fresh data loads in the background. Entries older than that are treated as a miss and fetched again.
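Put together, a read falls through the layers in order: memory first, then the persisted copy, then the API. The sketch below shows only that fall-through and leaves out the background refresh. The `Board` shape, the `BoardSources` interface, and `readBoard` are hypothetical names; in the real plugin the persisted calls would be Convex functions like the query above.

```typescript
// Hypothetical types for illustration; the plugin's real shapes may differ.
interface Board {
  id: string;
  title: string;
}

type ServedFrom = "memory" | "persisted" | "api";

interface BoardSources {
  memory: Map<string, Board>;
  loadPersisted: (boardId: string) => Promise<Board | null>; // e.g. a Convex query
  savePersisted: (board: Board) => Promise<void>; // e.g. a Convex mutation
  fetchFromApi: (boardId: string) => Promise<Board>; // Monday.com request
}

async function readBoard(
  boardId: string,
  sources: BoardSources
): Promise<{ board: Board; servedFrom: ServedFrom }> {
  // Fastest path: the in-memory cache for this page session.
  const inMemory = sources.memory.get(boardId);
  if (inMemory) return { board: inMemory, servedFrom: "memory" };

  // Next: the persisted copy, which survives refreshes and restarts.
  const persisted = await sources.loadPersisted(boardId);
  if (persisted) {
    sources.memory.set(boardId, persisted);
    return { board: persisted, servedFrom: "persisted" };
  }

  // Last resort: hit the API, then fill both cache layers.
  const fresh = await sources.fetchFromApi(boardId);
  sources.memory.set(boardId, fresh);
  await sources.savePersisted(fresh);
  return { board: fresh, servedFrom: "api" };
}
```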

The results

- Initial load: 8 seconds → 800ms (cached data renders immediately)
- Subsequent loads: 8 seconds → 200ms (in-memory cache hit)
- API calls: 47 per load → 12 per session (deduplication + caching)
- Monday.com API quota usage: dropped 85%

The dashboard went from something users tolerated to something they preferred over Monday.com's own interface for quick status checks. That was not the goal, but it validated the approach.

What we learned

Cache at the right granularity. Caching an entire board response is wasteful — if one item changes, the whole cache invalidates. Caching individual items is expensive — too many cache entries to manage. We cache at the board-section level: items within a group, columns for a board, updates for an item.

TTLs should vary by data type. Board structure: 5 minutes. Item values: 60 seconds. User presence: 10 seconds. Using one TTL for everything either refetches stable data too often or serves volatile data long after it has changed.
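One simple way to keep these values in a single place is a typed lookup table. The constant and function names here are illustrative; only the three durations come from the paragraph above.

```typescript
// TTLs per data type, in milliseconds.
const TTL_MS = {
  boardStructure: 5 * 60 * 1000,
  itemValues: 60 * 1000,
  userPresence: 10 * 1000,
} as const;

type CachedDataType = keyof typeof TTL_MS;

// The union type means an unknown data type fails at compile time
// instead of silently falling back to a default TTL.
function ttlFor(dataType: CachedDataType): number {
  return TTL_MS[dataType];
}

console.log(ttlFor("boardStructure")); // 300000
```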

Stale data is better than no data. A dashboard that shows 30-second-old data instantly is more useful than one that shows current data after an 8-second wait. Users do not need real-time accuracy for a project management dashboard — they need fast access to approximately current information.

Webhook-driven invalidation changes everything. Without webhooks, caching is a guessing game about freshness. With webhooks, caching is precise: data is fresh until told otherwise.
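A rough sketch of what webhook-driven invalidation could look like with board-section cache keys. Everything named here is an assumption for illustration: the `BoardChangeEvent` shape is a simplified stand-in rather than Monday.com's actual webhook payload, and the `board:<id>:group:<id>:` key layout is one possible convention.

```typescript
// Hypothetical, simplified event shape. A real webhook payload carries
// more fields and should be validated before use.
interface BoardChangeEvent {
  boardId: string;
  groupId?: string;
}

class InvalidatingCache {
  private store = new Map<string, unknown>();

  set(key: string, value: unknown): void {
    this.store.set(key, value);
  }

  has(key: string): boolean {
    return this.store.has(key);
  }

  // Drop every entry whose key starts with the prefix; returns the count removed.
  invalidatePrefix(prefix: string): number {
    let removed = 0;
    for (const key of Array.from(this.store.keys())) {
      if (key.startsWith(prefix)) {
        this.store.delete(key);
        removed += 1;
      }
    }
    return removed;
  }
}

// A group-level change clears only that group's entries; a board-level
// change clears everything cached for the board.
function handleBoardChange(cache: InvalidatingCache, change: BoardChangeEvent): number {
  const prefix = change.groupId
    ? `board:${change.boardId}:group:${change.groupId}:`
    : `board:${change.boardId}:`;
  return cache.invalidatePrefix(prefix);
}
```

The trailing colon in each prefix matters: without it, invalidating board 1 would also wipe entries for board 12.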
